About confidence interval interpretation. Motivated by the results of this poll

[Thread]

(1/16) https://twitter.com/MaartenvSmeden/status/1058401290936635392

Most of us have probably been taught about 95% confidence intervals and their interpretation in rather vague terms, such as "the limits reflecting the uncertainty in the parameter estimate" or "95% confident about the true value of the parameter"

(2/16)

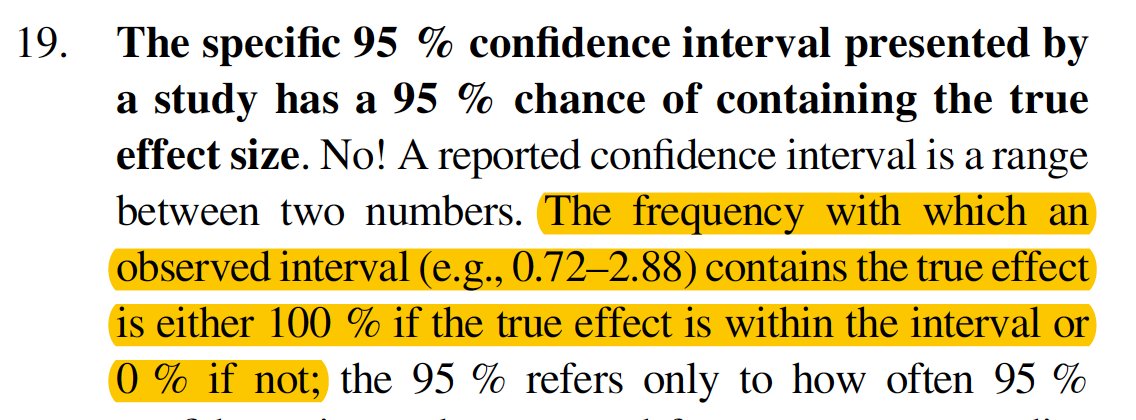

You may also remember the warning that usually comes with it: 95% *confidence* doesn't mean 95% *probability* that the true value is actually in the confidence interval

(3/16)

Nothing wrong so far. All true. In fact, the only thing we know for sure* is that 95% confidence intervals contain the true value of the parameter 95% of the time.

*actually this is only true if all the assumptions used to compute the intervals were correct

(4/16)
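To make the "95% of the time" claim concrete, here is a minimal simulation sketch. Everything in it is an assumption for illustration: the true mean, the known standard deviation, the sample size and the number of repeated studies are made up, and it uses the simplest case (a z-interval for a mean with known sigma), where all the assumptions behind the interval hold by construction.

```python
import random
import statistics
from math import sqrt

def coverage(true_mean=10.0, sigma=2.0, n=30, n_studies=10_000, seed=1):
    """Fraction of 95% z-intervals (known sigma) that contain true_mean."""
    rng = random.Random(seed)
    z = statistics.NormalDist().inv_cdf(0.975)  # about 1.96
    half_width = z * sigma / sqrt(n)
    hits = 0
    for _ in range(n_studies):
        # One hypothetical study: draw n observations and average them.
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        sample_mean = statistics.fmean(sample)
        # A single interval either contains the true mean or it does not.
        if sample_mean - half_width <= true_mean <= sample_mean + half_width:
            hits += 1
    return hits / n_studies

print(coverage())  # long-run share of intervals that cover the true mean
```

The share should land close to 0.95. Note that this is a statement about the procedure over many repetitions, not about any one interval in the loop.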

The chance that any particular confidence interval contains the true value is an all or nothing game, as this great paper explains: https://link.springer.com/article/10.1007/s10654-016-0149-3

(5/16)

Does that sound like playing with words? Okay, but stay with me for a few more seconds

(6/16)

If you have ever come across Bayesian inference, you probably know that its alternative to the confidence interval is the so-called credible interval, which *does* come with the interpretation that there is a 95% *probability* that the true value is in the interval

(7/16)

Problem solved, right? Just go Bayesian. Well, maybe, but it might not be that easy. To get a credible interval around a single parameter estimate (say, a regression coefficient) we have to predefine a so-called prior distribution for that coefficient

(8/16)

Prior distributions are probability distributions that define - before looking at the data - how likely you believe/think/expect different values for the parameters are

(9/16)

It doesn't have to be *that* complicated. We can just say that for a very large range of values all values are equally likely. This is sometimes called a "flat prior" or "uninformative prior"

(10/16)

For simple situations, the Bayesian analysis with such a flat prior will give a credible interval whose upper and lower limits are numerically equivalent to those of the standard ("frequentist") confidence interval analysis

(11/16)
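A small numerical sketch of that equivalence, under assumptions that are mine and not from the thread: the estimate is treated as normally distributed with a known standard error, the flat prior is approximated by a normal prior with a very large standard deviation, and the estimate (1.0) and standard error (0.4) are hypothetical numbers.

```python
from math import sqrt
from statistics import NormalDist

Z = NormalDist().inv_cdf(0.975)  # about 1.96

def confidence_interval(estimate, se):
    """Standard 95% Wald confidence interval."""
    return estimate - Z * se, estimate + Z * se

def credible_interval(estimate, se, prior_sd, prior_mean=0.0):
    """95% credible interval under a normal prior (conjugate normal update)."""
    prior_precision = 1.0 / prior_sd**2
    data_precision = 1.0 / se**2
    post_var = 1.0 / (prior_precision + data_precision)
    # Posterior mean is a precision-weighted average of prior mean and estimate.
    post_mean = post_var * (prior_precision * prior_mean + data_precision * estimate)
    post_sd = sqrt(post_var)
    return post_mean - Z * post_sd, post_mean + Z * post_sd

ci = confidence_interval(1.0, 0.4)                       # hypothetical estimate and SE
nearly_flat = credible_interval(1.0, 0.4, prior_sd=1e6)  # very wide prior, stand-in for "flat"
print(ci)
print(nearly_flat)
```

With a prior this wide, the two printed intervals agree to many decimal places. Narrowing `prior_sd` pulls the credible interval toward the prior mean and away from the confidence interval.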

Hey! That means I can interpret my confidence interval as a credible interval with a flat prior? Yes and no

(12/16)

The problem lies in assuming a flat prior. Unfortunately, the flat prior is often an unrealistic prior in the life sciences. As my colleague Erik van Zwet has recently worked out: https://github.com/ewvanzwet/default-prior

(13/16)

In fact, he shows that to get a confidence interval that approximately has a probability interpretation you have to divide the parameter estimate by 2 and the estimated standard error by the square root of 2 (see his GitHub 👆 for more info)

(14/16)

Yes, this will get you a completely different interval than simply calculating the confidence interval

(15/16)
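To see how different, here is a rough sketch that applies the rule literally: halve the estimate, divide the standard error by the square root of 2, then build the usual 95% interval around the result. The function names and the input numbers (estimate 1.0, standard error 0.4) are hypothetical, and the normal approximation for the interval is my assumption. As a side note, the same numbers fall out of a conjugate normal update with a zero-centered normal prior whose standard deviation equals the standard error; whether that matches the derivation in the linked repository is not something this thread states.

```python
from math import sqrt
from statistics import NormalDist

Z = NormalDist().inv_cdf(0.975)  # about 1.96

def standard_interval(estimate, se):
    """Usual 95% confidence interval: estimate +/- Z * se."""
    return estimate - Z * se, estimate + Z * se

def shrunken_interval(estimate, se):
    """Apply the rule from the thread: estimate / 2 and se / sqrt(2)."""
    return standard_interval(estimate / 2.0, se / sqrt(2.0))

estimate, se = 1.0, 0.4  # hypothetical regression coefficient and standard error
print(standard_interval(estimate, se))
print(shrunken_interval(estimate, se))
```

With these made-up numbers the standard interval excludes zero, while the shrunken interval is centered on 0.5, is narrower, and includes zero.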

The bottom line is this: to be able to justify saying that there is a 95% *probability* that the true value is within a particular interval, you may have to do some extra calculations. The standard confidence interval doesn't come with a 95% probability interpretation

(16/16)
